The previous prototype had all the functionality of Gzweb, except for the translate and rotate modes. This week, I’ve added translate and rotate manipulators controlled by touch. Check out the result in the video, and read on to find out what’s going on.

3D model manipulation

Translating and rotating objects in 3D space using a 2D touch screen is a challenge. The video below shows some possible interactions on a big touch screen. Keep in mind that mobile devices have small screens, so it is best if interaction is performed with at most 2 fingers.

Manipulation in Gzweb is done with the help of TransformControls.js by arodic. This is a Three.js class which can do basically anything related to transforms: 7 kinds of translations and 5 kinds of rotations – on local or world coordinates – and scaling uniformly or by axis. A lot. All pretty focused on desktops though.

TransformControls is pretty “involved,” as is pointed out in this issue (still open). So I decided to simplify things before implementing touch interactions, as follows (still not sure if it was a good idea though):

- Strip it down to its fundamental bits;

- Understand well what’s going on;

- Implement whatever touch interactions are needed;

- If I need fancy stuff later, I can build upon that.

That’s how I got rid of:

- Local mode, so now there are only transforms with respect to the world frame.

- TXYZ, RE, RXYZ transforms.

- Scaling transforms.

- displayAxes: before, there were hidden pickerAxes bigger than the more elegant displayAxes. So you had a big area to select, but only thin lines would show on the screen. Now the handles you pick are the same that are displayed.

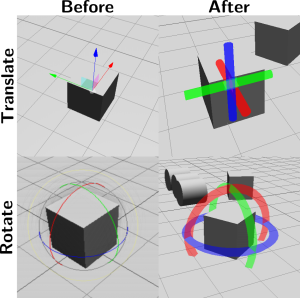

I also reshaped the picker axes. That’s why the looks are so different from before, check it out:

Mode selection

On Gzweb, the mode selection happens through buttons (remember? I added a rotate button 😀 ). There are 3 modes: view (camera control), translate and rotate. On a mobile interface it is very important to keep the visuals clean, with as few buttons as possible. So I’m trying something out: changing modes by tapping/clicking an object. Tapping an object alternates between translate and rotate modes. Tapping another object brings the controls to that object. Tapping on no object brings us back to camera control.
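The tap behaviour described above can be sketched as a small state function. This is only an illustration, not the Gzweb Mobile code: `nextState` and the shape of the state object are hypothetical, and it assumes that a newly selected object starts in translate mode when coming from view mode and otherwise keeps the current manipulation mode.

```javascript
// Hypothetical sketch of tap-based mode selection (not the Gzweb source).
// state:  { mode: 'view' | 'translate' | 'rotate', selected: string | null }
// tapped: id of the tapped object, or null when the tap hit no object.
function nextState(state, tapped) {
  if (tapped === null) {
    // Tapping on no object goes back to camera control.
    return { mode: 'view', selected: null };
  }
  if (tapped !== state.selected) {
    // Tapping another object moves the controls to it.
    // Assumption: from view mode the first tap goes to translate;
    // otherwise the current manipulation mode is kept.
    const mode = state.mode === 'view' ? 'translate' : state.mode;
    return { mode: mode, selected: tapped };
  }
  // Tapping the selected object alternates between translate and rotate.
  const mode = state.mode === 'translate' ? 'rotate' : 'translate';
  return { mode: mode, selected: tapped };
}
```

Keeping this logic in one pure function makes it easy to try alternative rules during usability tests without touching the event handlers.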

Rotate controls

The rotate controls are pretty much the same as before, but now work with touch as well. You first select the axis you want to manipulate by tapping/clicking on it, then drag your finger/mouse around the circle to rotate. It turned out that making the manipulators thicker simplified the code and made them more natural to tap than skinny lines. I’m not sure about the looks though. What do you think?
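To give an idea of how a drag around the circle can become a rotation, here is a simplified sketch that works purely in screen space. It is an assumption for illustration, not how TransformControls is implemented: `dragAngle` is a hypothetical helper, and `center` stands for the manipulator’s centre projected onto the screen.

```javascript
// Hypothetical helper: angle swept around `center` when the pointer
// moves from `prev` to `curr`. All points are {x, y} in screen pixels.
// The result is in radians, wrapped to the range (-PI, PI].
// Note: screen y grows downward, so a positive result looks clockwise
// on screen.
function dragAngle(center, prev, curr) {
  const a0 = Math.atan2(prev.y - center.y, prev.x - center.x);
  const a1 = Math.atan2(curr.y - center.y, curr.x - center.x);
  let delta = a1 - a0;
  // Wrap so that crossing the +/-PI boundary does not cause a jump.
  if (delta > Math.PI) delta -= 2 * Math.PI;
  if (delta <= -Math.PI) delta += 2 * Math.PI;
  return delta;
}
```

The small per-event angles would then be accumulated and applied about the selected axis.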

Translate controls

I could have done the same with the translation, but I took this chance to try something different. I wanted to get used to handling multi-touch, so I implemented something like the “Horizontal-Z” translation shown in the video above. The first touch translates the object on the XY plane (horizontal). The second touch controls the Z height. I haven’t implemented it very well yet though, so it works better from some angles than others.
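As a rough sketch of the idea (not the actual implementation), the first touch can be turned into a ray from the camera and intersected with the horizontal plane at the object’s current height, while the second touch’s vertical drag changes the height. The function names, the `{origin, dir}` ray format and the `metersPerPixel` scale are all assumptions made for this example.

```javascript
// Hypothetical helper: intersect a ray with the horizontal plane z = height.
// origin and dir are {x, y, z}. Returns the hit point, or null when the
// ray is parallel to the plane or the plane is behind the ray origin.
function intersectHorizontalPlane(origin, dir, height) {
  if (Math.abs(dir.z) < 1e-9) return null;
  const t = (height - origin.z) / dir.z;
  if (t < 0) return null;
  return { x: origin.x + t * dir.x, y: origin.y + t * dir.y, z: height };
}

// Hypothetical sketch of a "Horizontal-Z" update.
// position:   current object position {x, y, z}
// ray:        {origin, dir} built from the first touch
// dragPixels: vertical movement of the second touch (screen y grows
//             downward, so dragging up is negative); 0 if there is none
// metersPerPixel: assumed scale factor for the height control
function horizontalZ(position, ray, dragPixels, metersPerPixel) {
  const hit = intersectHorizontalPlane(ray.origin, ray.dir, position.z);
  return {
    x: hit ? hit.x : position.x,
    y: hit ? hit.y : position.y,
    z: position.z - dragPixels * metersPerPixel
  };
}
```

A ray that is nearly parallel to the plane hits it very far away, which is one way a scheme like this can behave differently depending on the viewing angle.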

Usability Tests

OSRF has some nice guidelines to improve the usability of new features. As I coded, I was doing some “quick and dirty” tests (as Steffi would say) with people around me and got some nice feedback which directed me a bit. For example:

- A user didn’t understand what to do after selecting a simple shape to be inserted. Now the panel closes the moment a shape is selected, revealing the object, instead of having to be closed manually.

- A user couldn’t understand why sometimes touch was controlling the camera and other times it was controlling the object. I added a mode indicator to the header so it becomes clearer. The indicator may be mistaken for a button though, so this needs to be tested with more people. Actually, the whole selection control must be tested.

Some other issues haven’t been dealt with yet, for example:

- Although going to translate is straightforward, it’s not clear that a second click will go to rotate.

- The translate method is not intuitive, but after explanation it can be understood.

- When translating, the finger might cover the object, making it hard to see where it’s being placed.

Now that all functionality is there, it’s about time to start conducting usability tests with more people and get more feedback. I’ll be sharing my Usability Test Protocol with you soon. I’ll also be implementing alternative versions with different features to ask users which they prefer.

Documentation

This week I also started documenting Gzweb Mobile, in two ways:

JSDoc is an inline documentation generator for JavaScript. The latest files on Gzweb Mobile already have some comments on them (at the moment mostly for my own benefit though). By the end of the internship I’ll have made proper documentation for both Gzweb and Gzweb Mobile.
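For readers who haven’t seen JSDoc before, this is roughly what such a comment looks like. The function is a made-up example and is not taken from Gzweb Mobile; only the comment syntax and the `@param` and `@returns` tags are standard JSDoc.

```javascript
/**
 * Convert an angle from degrees to radians.
 * (Hypothetical example function, for illustration only.)
 * @param {number} degrees - Angle in degrees.
 * @returns {number} The same angle in radians.
 */
function degToRad(degrees) {
  return degrees * Math.PI / 180;
}
```

The JSDoc tool reads comments like this one and generates HTML reference pages from them.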

I’ve also started a page for Gzweb Mobile on the Gazebo wiki. I’m trying to keep it straight to the point though, so I’m not writing boring details like here on the blog 😛